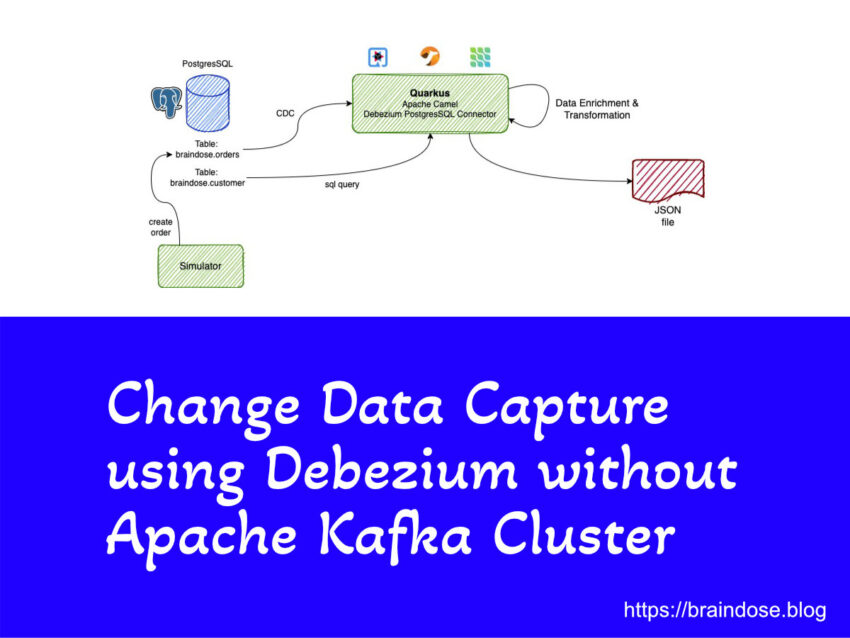

Debezium is built on the Kafka Connect framework, and we need an Apache Kafka cluster to store the change events captured from source databases. Sometimes we do not require the level of fault tolerance and reliability provided by an Apache Kafka cluster, but we still need Change Data Capture (CDC). This is where Debezium Engine comes into the picture.

Category: Containers

Monitor and Analyze Nginx Ingress Controller Logs on Kubernetes using ElasticSearch and Kibana

We are going to learn how to deploy and configure Fluent Bit (a sub-project of FluentD) to capture the Nginx Ingress Controller logs on Kubernetes and stream the formatted logs to ElasticSearch. We can then perform log analytics using Kibana.

Running ElasticSearch and Kibana on RPi4 for Logs Analytics

Let’s look at how we can quickly deploy a standalone ElasticSearch and Kibana using Docker Compose to start monitoring application and system logs.

Automate Kubernetes etcd Data Backup

When it comes to operating Kubernetes, cluster backup should be one of the key operations in our backup strategy. We need to properly back up and secure the application data, which is typically stored in persistent volumes. We also need to back up the etcd data, because it is the operational data store that Kubernetes relies on to function. In this post we look at how to schedule the etcd data backup using out-of-the-box Kubernetes features.

K8s on RPi 4 Pt. 5 – Exposing Applications to the World

There are a few approaches to exposing your applications to the world outside of a Kubernetes cluster. One of them is using an Ingress Controller. We are going to learn how we can use the Nginx Ingress Controller to expose container applications running on top of a Raspberry Pi Kubernetes cluster.