Table of Contents

- Overview

- Serverless vs Function

- History of Serverless

- Benefits of Serverless

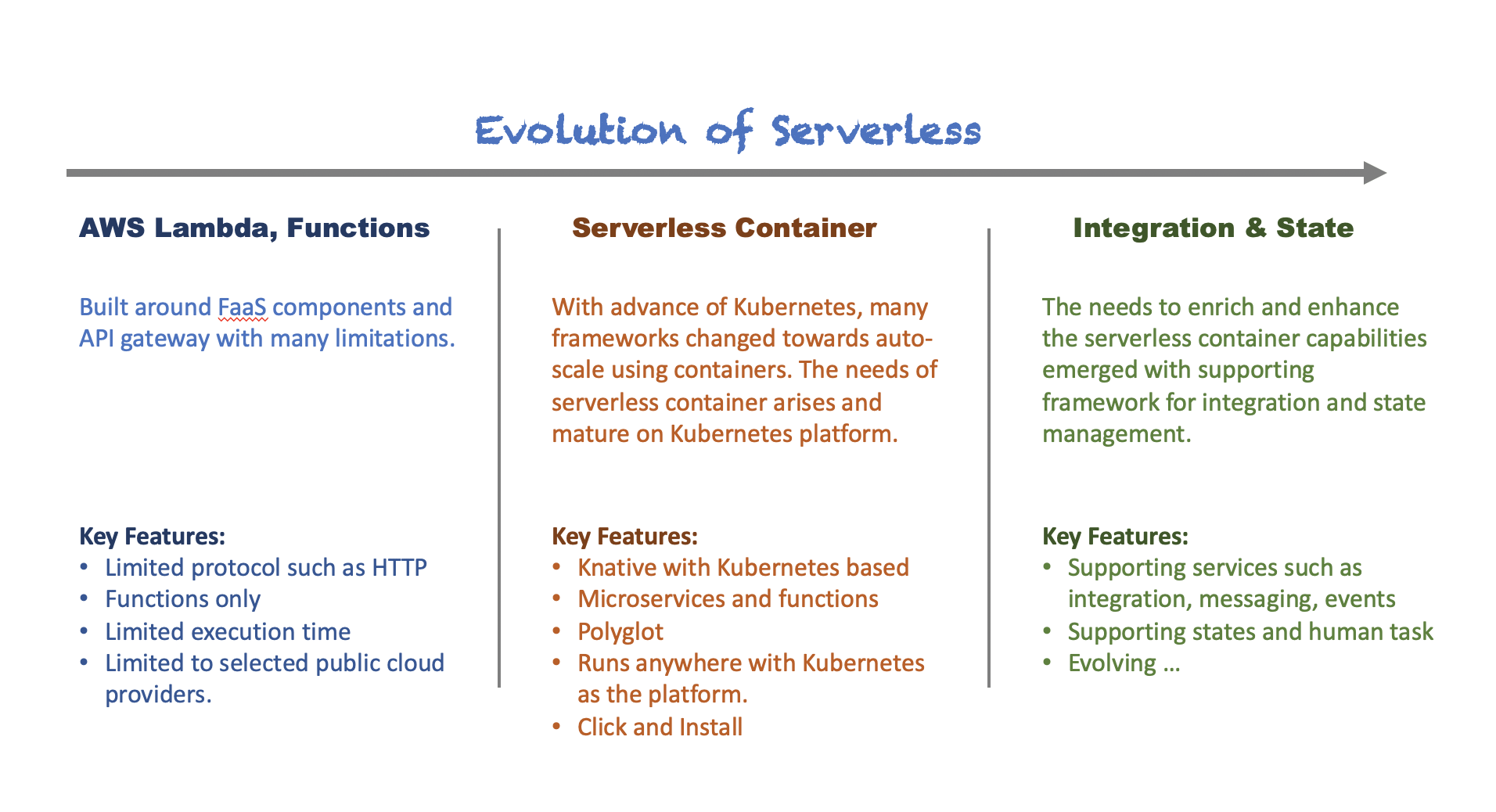

- Evolution of Serverless

- So What is Knative?

- Benefit of Knative

- List Of Knative Offerings

- Summary

- References

Overview

Many articles have been written about serverless, but they fail to provide a clear definition of, and distinction between, Function, Serverless and Knative. I was puzzled when I was asked this very question and could not give a precise answer.

So I decided to dig into the topic, and here is what I found. We will first look at what Serverless and Function are, and then dive into what makes Knative shine.

Serverless vs Function

So what are Serverless and Function, really?

Let’s borrow the definition from Wikipedia.

Serverless computing is a cloud computing execution model in which the cloud provider runs the server, and dynamically manages the allocation of machine resources. Pricing is based on the actual amount of resources consumed by an application, rather than on pre-purchased units of capacity…

Function (commonly referred to as FaaS), again as defined by Wikipedia…

Function as a service (FaaS) is a category of cloud computing services that provides a platform allowing customers to develop, run, and manage application functionalities without the complexity of building and maintaining the infrastructure typically associated with developing and launching an app. Building an application following this model is one way of achieving a “serverless” architecture, and is typically used when building microservices applications.

I bet you are scratching your head right now. Do the above definitions satisfy your thirst? If not, you are not alone.

We all know Serverless, Function and Knative are meant to provide a modern application platform that is consumption based, fast to start up, lightweight, cost effective, event based, modular and so on. But how do they relate to each other, and how do they differ? What do I need to know in order to craft or choose the right solution for my organization’s needs?

In Cloud Programming Simplified: A Berkeley View on Serverless Computing, a paper led by Ion Stoica from the University of California at Berkeley, the authors put it nicely and clearly.

The serverless computing name presumably stuck because it suggests that the cloud user simply writes the code and leaves all the server provisioning and administration tasks to the cloud provider …

While cloud functions – packaged as FaaS (Function as a service) offerings – represent the core of serverless computing …

… cloud platforms also provide specialized serverless frameworks that cater to specific application requirements as BaaS (Backend as a service) offerings.

Put simply, serverless computing = FaaS + BaaS.

A serverless function (also called a function or cloud function) is unambiguously equal to FaaS, which lets application developers define, design and implement application logic as Functions following the framework.

When the Function is ready, it needs to be deployed onto a platform that can run, scale and manage it, and that provides the resources the Function needs to operate properly. We refer to this as the Serverless platform (or BaaS, as described above).

Now, why is it important to know the differences? The reason is simple: it will influence how you craft the right solution for your modernized application platform, and help you decide on the platform and technology that meet your requirements.
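To make the split between Function and platform concrete, here is a minimal Python sketch. It is not the API of any real FaaS provider: the names `greet` and `invoke` are hypothetical, and `invoke` only stands in for the work a real platform does (receiving the request, calling the Function, returning the reply).

```python
import json
from typing import Any, Callable, Dict

Event = Dict[str, Any]


def greet(event: Event) -> Dict[str, Any]:
    """The Function: application logic only, with no server code."""
    name = event.get("name", "world")
    return {"status": 200, "body": f"Hello, {name}!"}


def invoke(function: Callable[[Event], Dict[str, Any]], raw_request: str) -> str:
    """Hypothetical stand-in for the platform: decode, call, encode."""
    event = json.loads(raw_request)
    return json.dumps(function(event))


if __name__ == "__main__":
    print(invoke(greet, '{"name": "Knative"}'))
```

The developer owns only `greet`. Everything around it, including where it runs and how many copies run, is the concern of the platform.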

History of Serverless

Before we proceed further, it helps to look back at the history of serverless in order to understand it better.

The Serverless Framework is a free and open-source web framework written using Node.js. Serverless was developed by Austen Collins and released to the public in 2015[1]. Serverless is the first framework developed for building applications on AWS Lambda, a serverless computing platform provided by Amazon as part of Amazon Web Services.

Currently, applications developed with Serverless can be deployed to other Function as a Service providers, including Microsoft Azure with Azure Functions, IBM Bluemix with IBM Cloud Functions based on Apache OpenWhisk, Google Cloud using Google Cloud Functions, Oracle Cloud using Oracle Fn, Kubeless based on Kubernetes, Spotinst and Webtask by Auth0.[2]

[1] In the rest of this article, I will use the term non-container based serverless to refer to the Serverless framework introduced by Austen Collins. I will cover serverless containers later.

[2] This list of supported serverless platforms refers to implementations following the original Serverless framework introduced by Austen Collins. This Serverless framework allows pieces of code to be deployed directly as Functions, but it is not designed for container applications. There is a Kubernetes-based serverless platform called Knative, whose mission is to empower microservices with a cloud-vendor-free implementation, which I will introduce later.

Benefits of Serverless

The decision to choose serverless as the platform for our business depends not only on the technology capabilities it provides; it is just as crucial to understand whether our business will be able to reap the benefits of serverless.

Cost Saving

Serverless allows businesses to deploy and run applications as Functions. Functions consume server resources only while they are running in response to traffic, and they tend to use far less CPU and memory than traditional applications. Because of these small resource requirements and fast start-up, serverless applications tend to be used for IoT and event-based business scenarios, where speed and resource consumption are the primary considerations. For these types of use cases, serverless really does help companies save a lot of money.

Serverless platforms are usually offered by public cloud providers, because they have the resources to provide on-demand server allocation and management to a huge corporate audience in the most cost-effective manner. Companies running their serverless applications on these public clouds are charged on a pay-per-use model. This helps to prevent both under-provisioning and over-provisioning, and provides huge cost savings to companies whose applications are dynamic and whose resource usage is hard to predict.

Creating and maintaining this type of Serverless platform at that scale and complexity is not cost effective for corporate consumers. You might end up under-provisioned or over-provisioned, because when the platform is intended for internal use, it always raises the question of how much server capacity is the right amount to start with and to maintain. This is why we look at Knative later in this article, for companies that wish to host and manage their own serverless platform environment.
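The difference between pay-per-use and fixed provisioning can be illustrated with some simple arithmetic. The Python sketch below is only an illustration: the function names are hypothetical, and every price and workload figure is made up for the example rather than taken from any cloud provider’s price list.

```python
def pay_per_use_cost(invocations: int, seconds_per_invocation: float,
                     memory_gb: float, price_per_gb_second: float) -> float:
    """Bill only for the memory-time the Functions actually consume."""
    gb_seconds = invocations * seconds_per_invocation * memory_gb
    return gb_seconds * price_per_gb_second


def provisioned_cost(servers: int, hours: float,
                     price_per_server_hour: float) -> float:
    """Bill for every hour the servers exist, busy or idle."""
    return servers * hours * price_per_server_hour


if __name__ == "__main__":
    # Hypothetical month: one million short invocations versus two
    # always-on servers sized for the peak. All prices are assumptions.
    serverless = pay_per_use_cost(1_000_000, 0.2, 0.5, 0.00002)
    fixed = provisioned_cost(2, 730, 0.05)
    print(f"pay per use: {serverless:.2f}  provisioned: {fixed:.2f}")
```

With a spiky, hard-to-predict workload the first bill follows actual usage, while the second stays the same whether the servers are busy or idle. With steady, heavy traffic the comparison can go the other way, so it is worth running the numbers for your own workload.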

Empowering Developers

Developing applications as Functions takes infrastructure concerns off developers’ minds, which is a huge advantage for the application team. Most of the time, the serverless platform also provides supporting services such as security, scaling, storage, load balancing and so on.

Developers can now focus on enhancing and enriching the business applications instead of working on areas they do not specialize in, such as security. Typically, serverless platform providers also support numerous popular programming frameworks out of the box.

Developers do not need to learn new programming skills in order to start implementing Functions. This assumes that the serverless service provider offers a range of programming frameworks to choose from, which in reality might not be the case. The idea is also that the application team has the flexibility to decide which programming framework suits the nature of the business application.

Quicker to Market

One of the most compelling reasons is the ability to deliver business capabilities to market faster, so that companies can compete in an ever-changing market. Each Function, or small group of Functions, can be implemented, tested and rolled out independently of the others, which shortens the time needed to roll new business capabilities out into production.

Evolution of Serverless

As briefly covered in History of Serverless, the non-container based serverless framework started to gain popularity with AWS Lambda, using Functions: small snippets of code that run on demand.

With the advancement and popularity of Kubernetes and containers, people started to realize that the same serverless traits and benefits can be applied to microservices and containers. Serverless containers are no longer limited to Function runtimes; we can now run pieces of code as containers, leveraging the Kubernetes platform to auto-scale, route traffic and manage the serverless applications.

This is where Knative comes in: a Kubernetes-based serverless container platform.

But the destination of this evolution is the point where serverless eventually handles more complex orchestration and integration patterns, combined with some level of state management.

For the most part, serverless means stateless applications, but many solutions are now being created to help with orchestration and to re-create well-known integration patterns in a serverless way. Eventually serverless becomes essentially another feature of your platform as a service, since in practice most enterprises will be running a combination of serverless and non-serverless workloads: microservices, monoliths or functions, all running as containers, giving organizations the ability to choose the best tool for the job when needed.

So What is Knative?

Knative is a Kubernetes-based platform to build, deploy and manage modern serverless workloads. Kubeless is another serverless solution for the Kubernetes platform. Both are Kubernetes-based serverless platforms, and both take a modular approach. The major difference is that Knative is designed for both cloud native applications (containers) and Functions, while Kubeless is mainly designed for Functions; in other words, Kubeless is a non-container based serverless platform.

Knative uses Kubernetes and Istio technology to build, deploy and manage cloud native applications. It was designed and built around 3 major concepts to provide a modern serverless platform on top of Kubernetes.

- Build – A flexible approach to build source code into containers. Earlier versions of Knative included a build component. That component has been broken out into its own project, Tekton Pipelines.

- Serving – Builds on Kubernetes and Istio to provide rapid deployment and automatic scaling of serverless containers through a request-driven model for serving workloads based on demand.

- Eventing – A system that is designed to address a common need for cloud native development and provides composable primitives to enable late-binding event sources and event consumers.
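The request-driven scaling described under Serving can be sketched as a small calculation. The Python below is a simplified illustration, not Knative’s actual autoscaler: the function name and parameters are hypothetical, and it assumes the platform aims for a fixed number of in-flight requests per replica and scales to zero when there is no traffic.

```python
import math


def desired_replicas(in_flight_requests: int, target_concurrency: int,
                     max_replicas: int) -> int:
    """Return how many replicas are needed for the current demand."""
    if target_concurrency <= 0:
        raise ValueError("target_concurrency must be positive")
    if in_flight_requests <= 0:
        return 0  # no traffic: scale to zero
    needed = math.ceil(in_flight_requests / target_concurrency)
    return min(max_replicas, needed)  # never exceed the configured cap


if __name__ == "__main__":
    for load in (0, 1, 25, 1000):
        print(load, "->", desired_replicas(load, target_concurrency=10, max_replicas=5))
```

A real autoscaler also smooths its measurements over a time window so that short bursts do not cause replicas to flap up and down; that part is left out here to keep the idea visible.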

Benefit of Knative

Knative was born to address the gap between microservices (or containers) and serverless. Strictly speaking, it is not logical to compare Knative with non-container based serverless, since they serve different needs and situations. However, running serverless on Knative does address many limitations of today’s non-container based serverless platforms, on top of the Benefits of Serverless covered earlier.

Support for Container

One of the key benefits of running your serverless workloads on Knative is container support. You can have a common Kubernetes platform to run all your serverless workloads, microservices, cloud native applications, monoliths and more.

A side benefit of running all these different workloads on a single platform is a reduced maintenance effort and learning curve for your operations team.

Container support also provides a consistent development and application delivery experience and process, provided your application team is already working with containers.

Not to mention that many programming languages and frameworks are already supported as container images.

Cloud Providers Vendor-Free

Knative is an add-on on top of Kubernetes. This provides a cross-platform solution for serverless workloads: you can deploy your workloads on any cloud provider as long as Kubernetes is running there. You are no longer locked in to a specific public cloud provider.

This also gives consumers in regulated industries, such as government and finance, the opportunity to design their business applications following the serverless approach and run those serverless workloads in their own datacenters.

Operator Support

Knative is Operator enabled. This means that to deploy, run and manage your serverless applications, you just need to use an Operator to deploy the serverless capability on top of an existing Kubernetes platform. Companies can now choose, within the Kubernetes platform itself, when and where to deploy the serverless capabilities.

List Of Knative Offerings

The following is the list of Knative offerings provided by different vendors, pulled from the official Knative website. I will not attempt to compare them here.

- Gardener: Install Knative in Gardener’s vanilla Kubernetes clusters to add an extra layer of serverless runtime.

- Google Cloud Run for Anthos: extend Google Kubernetes Engine with a flexible serverless development platform. With Cloud Run for Anthos, you get the operational flexibility of Kubernetes with the developer experience of Serverless, allowing you to deploy and manage Knative based services on your own cluster.

- Google Cloud Run: A fully-managed Knative-based serverless platform. With no Kubernetes cluster to manage, Cloud Run lets you go from container to production in seconds.

- Managed Knative for IBM Cloud Kubernetes Service: A managed add-on for the IBM Kubernetes Service that enables you to deploy and manage Knative based services on your own Kubernetes cluster.

- OpenShift Serverless: Runs on OpenShift Container Platform and enables stateful, stateless, and serverless workloads to all run on a single multi-cloud container platform with automated operations. Developers can use a single platform for hosting their microservices, legacy, and serverless applications.

- Pivotal Function Service (PFS): A platform for building and running functions, applications, and containers on Kubernetes. PFS is based on the riff open source project.

- TriggerMesh Cloud: A fully-managed Knative and Tekton cloud-native integration platform. With support for AWS, Azure and Google event sources and brokers.

Summary

I hope you now have a better idea of Function, Serverless and Knative, and that you are able to answer some of the questions that may be put to you by your superiors, colleagues or customers. I hope this article helps you choose the right approach for your company on your solution journey.

Let me know what you think.

References

- Cloud Programming Simplified: A Berkeley View on Serverless Computing

- Examining The FaaS on K8S Market

- How Knative Can Unite Kubernetes and Serverless

- A Serverless Function Example: Why & How to Get There

- Serverless Functions: Your Website New Best Friend

- What is Knative?

- Knative Crowds out Other Serverless Framework (and other CNCF Survey Takeaways)